- How is routing done?

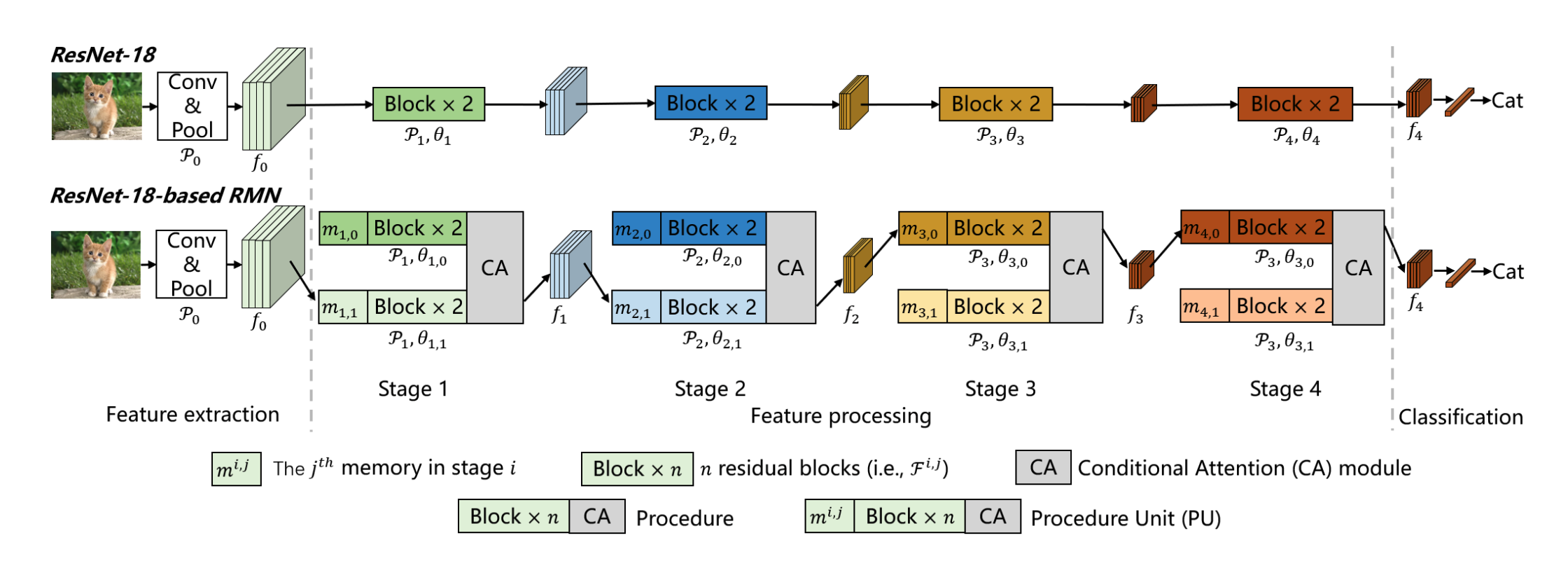

- Pass the feature $f$ through Global Average Pooling, then run a nearest-neighbor search against the memory $\boldsymbol{m}$ (the paper uses Euclidean distance)

- The memory is placed at the start of each block
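The routing step described above can be sketched as follows. This is a minimal illustration, not the paper's code: it assumes the memory of a block holds one length-C vector per candidate procedural unit and that routing is hard (the single nearest slot wins). The names `global_average_pool`, `euclidean_distance` and `route` are hypothetical.

```python
import math
from typing import List, Sequence

# C x H x W feature map as nested lists (channels, rows, columns)
FeatureMap = Sequence[Sequence[Sequence[float]]]


def global_average_pool(feature: FeatureMap) -> List[float]:
    """Collapse each H x W channel to its mean, giving a length-C vector."""
    pooled = []
    for channel in feature:
        values = [v for row in channel for v in row]
        pooled.append(sum(values) / len(values))
    return pooled


def euclidean_distance(a: Sequence[float], b: Sequence[float]) -> float:
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))


def route(feature: FeatureMap, memory: Sequence[Sequence[float]]) -> int:
    """Hypothetical routing: index of the memory slot nearest to GAP(f).

    Assumes one length-C memory vector per candidate procedural unit
    and hard (argmin) routing.
    """
    query = global_average_pool(feature)
    distances = [euclidean_distance(query, slot) for slot in memory]
    return min(range(len(memory)), key=lambda i: distances[i])


# Usage: 2 channels, each 2 x 2
f = [[[1.0, 1.0], [1.0, 1.0]],
     [[3.0, 3.0], [3.0, 3.0]]]
m = [[0.0, 0.0], [1.0, 3.0], [5.0, 5.0]]
print(global_average_pool(f))  # [1.0, 3.0]
print(route(f, m))             # 1
```

Because each block carries its own memory, this lookup would run once at the entry of every block in such a setup.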

- How is the memory initialized?

- Doesn't this blow up the parameter count...?

- Judging from the ablation, CA does not seem to contribute much to performance

- CA appears to be optional in the first place

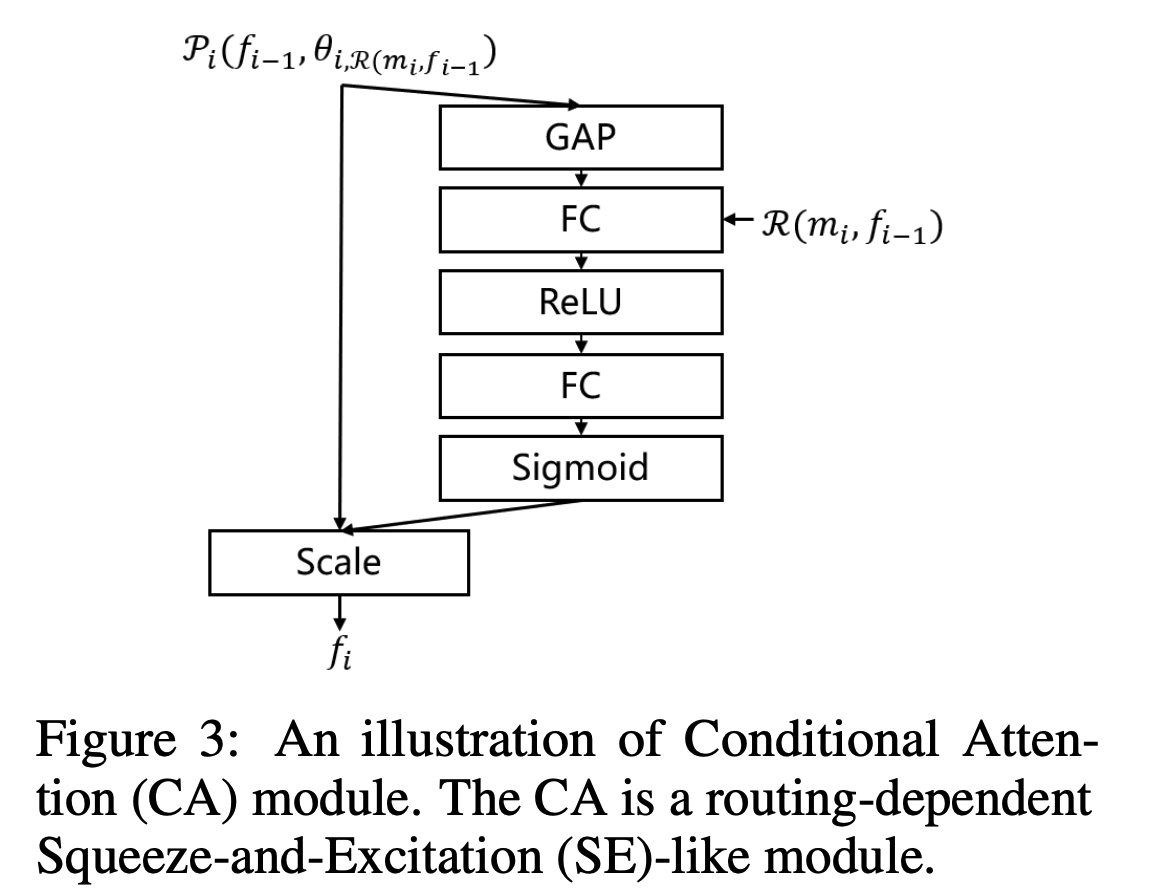

- CA = Conditional Attention

We then introduce the CA module. The same channels of features produced from different procedural units may represent different semantic meanings. For example, the first convolution kernel of a procedure may focus on animals’ fur. However, the first convolution kernel of another procedure may focus on furniture’s texture. The inconsistent semantic meaning of different features increases the learning difficulty. Inspired by the position-coding in ViT, we introduce the CA module to do routing-dependent channel-wise attention to the features, relieving the inconsistency adaptively.

- In short, it adds attention over the feature maps

- Like Squeeze-and-Excitation, the CA block starts with Global Average Pooling
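A rough sketch of what routing-dependent, SE-style channel attention could look like. The notes only say CA is SE-like and starts with GAP; how the route enters is an assumption here: a hypothetical learned embedding per procedural unit is added to the pooled vector (loosely mirroring how ViT adds a position code), then a single linear layer plus sigmoid produces per-channel gates. `conditional_attention`, `route_embeddings`, `weight` and `bias` are all hypothetical names, and the single FC layer is a simplification of SE's two-layer bottleneck.

```python
import math
from typing import List, Sequence

FeatureMap = List[List[List[float]]]  # C x H x W


def global_average_pool(feature: FeatureMap) -> List[float]:
    return [sum(v for row in ch for v in row) / (len(ch) * len(ch[0]))
            for ch in feature]


def sigmoid(x: float) -> float:
    # Numerically stable logistic function
    if x >= 0:
        return 1.0 / (1.0 + math.exp(-x))
    e = math.exp(x)
    return e / (1.0 + e)


def conditional_attention(
    feature: FeatureMap,
    route_index: int,
    route_embeddings: Sequence[Sequence[float]],
    weight: Sequence[Sequence[float]],
    bias: Sequence[float],
) -> FeatureMap:
    # 1. Squeeze: GAP over H x W, as in Squeeze-and-Excitation
    z = global_average_pool(feature)
    # 2. Condition on the route (assumption): add the selected unit's embedding
    z = [zi + ei for zi, ei in zip(z, route_embeddings[route_index])]
    # 3. Excite: one linear layer + sigmoid -> one gate in (0, 1) per channel
    gates = [sigmoid(sum(w * zj for w, zj in zip(row, z)) + b)
             for row, b in zip(weight, bias)]
    # 4. Rescale each channel of the original feature map by its gate
    return [[[v * g for v in row] for row in ch]
            for ch, g in zip(feature, gates)]


# Usage: same input, two different routes -> different channels emphasized
f = [[[1.0]], [[1.0]]]
emb = [[10.0, -10.0], [-10.0, 10.0]]  # hypothetical per-route embeddings
eye = [[1.0, 0.0], [0.0, 1.0]]
zero = [0.0, 0.0]
print(conditional_attention(f, 0, emb, eye, zero))  # channel 0 kept, channel 1 suppressed
print(conditional_attention(f, 1, emb, eye, zero))  # channel 1 kept, channel 0 suppressed
```

The point of conditioning on the route is that the same channel index can be re-weighted differently depending on which procedural unit produced it, which is the inconsistency the quoted passage describes.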